Methodologies are best understood as instruments or frameworks to achieve specific objectives, not as ideologies requiring blind faith.

When tools replace thinking, instead of augmenting it, innovation quietly fails

Over the past decades, organisations have enthusiastically adopted methodologies designed to help them innovate, adapt, and move faster. Systems Thinking, Futures Thinking, Design Thinking, JTBD, Lean, Agile, to name just a few: each arrived promising clarity in complexity. And each delivered real value.

Yet somewhere along the way, a subtle but dangerous shift occurred: methodologies stopped being tools and started becoming ideologies.

What began as helpful frameworks gradually turned into default answers. Instead of sharpening judgment, they began to replace it. Instead of supporting thinking, they started to precede it.

This is the trap of methodologies: falling so deeply in love with a framework that we stop questioning whether it actually fits the problem in front of us.

Together with my colleagues at HorizonX, this has become part of our mission: to support organisations in better understanding the challenges they face and in choosing the right methodology – or mix of methodologies – so they avoid falling into this trap.

When the map becomes the territory

Methodologies are tools, not truths. They provide structure, shared language, and momentum in uncertain situations. But they are only maps, not the territory. They should be used as flexible guides, not treated as rigid dogmas.

Problems are not uniform. Some are predictable and repeatable; others are complex, ambiguous, or novel. Applying the same methodology indiscriminately replaces judgment with process or ritual. Artefacts replace insights. Ceremonies replace decisions. Activity is confused with progress.

Used well, a methodology sharpens thinking and accelerates outcomes. Used poorly, it becomes a substitute for leadership and responsibility.

Why we fall into the methodology trap

The trap is understandable. Methodologies offer comfort in environments filled with ambiguity. They:

- Reduce cognitive load

- Create shared language

- Provide a sense of progress

- Offer legitimacy (“this is best practice”)

They also come with ecosystems: certifications, toolkits, consultants, and success stories. Over time, questioning the method can feel almost heretical.

And so, instead of asking “What kind of problem are we dealing with?”, teams ask “Which methodology should we use?”. That is already the wrong question to start with, and it is often where the problem begins.

Many failures are diagnostic failures

Over the years, working with organisations across industries and geographies, I have heard the same complaints repeatedly, about nearly every popular methodology: some are seen as too superficial, others as too slow, some simply fail to deliver the expected outcomes.

The conclusion I gradually reached is this: the problem is rarely the methodology itself.

Most failures arise from:

- Using a methodology that does not match the nature of the problem

- Failing to adapt methods to organisational culture, maturity, or geography

- Introducing tools without preparing the organisation to absorb them

- Consultants falling in love with a preferred method and forcing it onto the situation

In other words, many failures are not execution failures; they are diagnostic failures.

A personal confession

I must plead guilty. Over the years, I too have had my crushes and fallen into this trap. I fell in love with one methodology, then another, grew attached to the certainty it offered, and tried to make it solve problems it was never designed to solve.

Early in our careers, this kind of infatuation is perhaps inevitable — just as it is in our personal lives. We cling to what gives us confidence, language, and a sense of direction.

But professional maturity (much like personal maturity) requires a different kind of relationship. We move beyond the crush into a more grounded form of “love”: something much deeper and more meaningful. A relationship based on deep listening, patience, contextual understanding, and discipline. The discipline to choose not what is most familiar or comfortable for us, but what is genuinely valuable for those we aim to support — what allows them, and the systems they inhabit, to genuinely thrive.

Diagnosis before prescription

Before comparing methodologies, one principle matters more than any framework: methods must match the nature of the problem.

- Clear problems require efficiency

- Complicated problems require expertise

- Complex problems require exploration and learning

- Uncertain futures require perspective, not precision

In most cases where methodologies fail, it is not because they are flawed, but because they are applied in the wrong context.

Over the years, working on various projects alongside my colleagues at DesignThinkers Academy, Ascent Group and with Dr. Robert Collins (with whom I share a deep passion for tools and frameworks, and from whom I have learned a lot), I came to realise that the starting point of every project should be an in-depth diagnosis of the problem we are trying to solve.

My passion for this topic made me gradually realise that this approach could be taken even further by incorporating additional, often overlooked variables, such as an organisation’s time horizon, level of ambition (optimisation versus transformation), and organisational readiness. Are we trying to solve a problem for the immediate present, or are we designing a solution meant to endure? Are we seeking a quick fix, a meaningful improvement, or true innovation?

These reflections have shaped our recent work at HorizonX, where we are developing a diagnosis tool intended to serve as a compass for organisational decision-making, helping teams more accurately assess which tools or frameworks are most appropriate for the challenges they face.

With that framing in mind, let’s examine some of the most commonly used methodologies, moving deliberately from exploratory to operational, and transparently highlighting where each excels — and where it breaks down.

This part of the article is not intended for experienced practitioners of these methods, but rather for those seeking a clear, high-level overview of the most commonly used frameworks in the innovation and continuous improvement space.

- Systems Thinking – A way of understanding how parts of a system interact and influence each other over time, best used for navigating complex, interconnected challenges where actions have ripple effects.

- Futures Thinking / Foresight – A structured approach to exploring multiple plausible futures and challenging assumptions, best used for long-term strategy and preparing for uncertainty.

- Design Thinking – A human-centred approach to problem-solving that emphasises empathy, ideation, and prototyping, best used for uncovering user needs and designing innovative solutions to ambiguous or “wicked” problems.

- Jobs To Be Done (JTBD) – A framework for understanding why people “hire” products or services to make progress in specific situations, best used for product discovery, prioritisation, and aligning teams around real customer motivations.

- Lean Six Sigma – A data-driven methodology for optimising processes, reducing waste, and improving quality, best used for operational excellence and efficiency in well-defined processes.

- Agile – An iterative, flexible approach to delivering work through small, incremental cycles with continuous feedback, best used for adaptive execution and fast delivery in complex, changing environments.

- Lean Start-Up – A methodology for testing hypotheses quickly through iterative experiments and minimum viable products, best used in early-stage innovation to reduce risk and validate ideas before scaling.

For those interested in learning more, here’s a more in-depth analysis of each methodology, highlighting their best uses and main challenges.

Systems Thinking

Systems Thinking helps you see how the parts of a system connect and influence each other, so you can make smarter decisions that actually stick: informed, sustainable decisions rather than isolated fixes.

What it is best at:

- Systems Thinking shifts attention from isolated events to underlying structures, relationships, and feedback loops. It helps teams understand how parts interact and why local fixes often create new problems elsewhere.

- It is particularly effective in environments where cause and effect are non-linear and delayed: supply chains, large organisations, ecosystems, public policy, or societal change.

- By making feedback loops explicit, it helps decision-makers anticipate second- and third-order consequences before acting.

- Rather than optimising individual components, it connects short-term actions to long-term outcomes and promotes learning over control.

Where it struggles:

- Insights often remain abstract. Translating system maps into concrete, actionable steps can be difficult for teams seeking immediate answers.

- Building meaningful system models takes time, data, and facilitation skill, which can feel slow in execution-driven environments.

- The sheer visibility of complexity can overwhelm stakeholders, creating the illusion that problems are too large to influence.

- Systems Thinking is also poorly suited for urgent, well-defined problems where speed and precision matter more than holistic understanding.

Bottom line

Systems Thinking is a powerful lens for understanding complexity and long-term impact, but it is not a delivery methodology.

Its trap lies in mistaking understanding for action: seeing the system clearly, yet failing to decide where and how to intervene.

Futures Thinking / Foresight

While Systems Thinking helps us understand how the current system works, Futures Thinking helps us explore how that system might change and what that means for decisions today.

What it is best at:

- Futures Thinking deliberately expands the time horizon, helping organisations look beyond short-term pressures and consider long-term forces, uncertainties, and structural shifts.

- Rather than predicting a single outcome, it explores multiple plausible futures through alternative scenarios and narratives.

- By challenging what is taken for granted today, it exposes assumptions about markets, technologies, behaviours, and power structures that may no longer hold tomorrow.

- Its greatest strength lies in supporting resilient strategy: strategies designed to remain viable across multiple futures rather than optimised for one expected path.

Where it struggles:

- Foresight outputs are often abstract: narratives, scenarios, and themes that can feel distant from immediate decisions.

- Meaningful foresight requires sustained scanning, synthesis, and facilitation, an investment that often clashes with short-term performance pressures.

- Organisations seeking certainty or predictions often struggle with probabilistic thinking and ambiguity.

- Without strong strategic follow-through, foresight risks remaining an intellectual exercise disconnected from real decisions.

Bottom line

Futures Thinking creates value by expanding perspective and shaping long-term intent, not by predicting outcomes.

Its trap appears when scenarios remain elegant stories with no consequence. Foresight only matters when it informs priorities, investments, and choices.

Design Thinking

Design Thinking reframes problems around real human needs and challenges hidden assumptions through empathy and experimentation.

What it is best at:

- Human-centred innovation: it is deeply rooted in the needs of the people you design for, with empathy as its foundation.

- It excels in situations where problems are ambiguous and poorly understood. Great for “wicked problems” where the solution isn’t obvious.

- It encourages cross-functional collaboration, reframing, ideation and rapid experimentation through low-fidelity and iterative prototyping.

- Helps test ideas early and cheaply.

- Design Thinking is particularly powerful at uncovering unmet needs and shifting perspectives early in the innovation process.

Where it struggles:

- Design Thinking often remains user-centric, but strategy-light. It tends to focus on what users want today, while underplaying long-term consequences, system constraints, or business viability.

- Speed and efficiency: its iterative nature can slow decision-making in time-critical contexts, and teams may struggle to scale insights into execution.

- Scalability: easy to get stuck in small experiments without moving to business implementation.

- Data-driven decisions: less suited for markets where quantitative metrics or competitive analysis are primary.

Bottom line

Design Thinking is powerful for human-centred design, fostering empathy, deep understanding of user needs, discovery, and creativity.

Its trap lies in assuming that what users need automatically aligns with what organisations should or can do, and that business strategies can be built solely on current user needs.

Jobs To Be Done (JTBD)

JTBD focuses on why people “hire” a product or service to make progress in a specific situation (to get a job done). It offers a sharp lens on why people choose one solution over another.

What it is best at:

- Discovering real customer motivation: very accurate at explaining why people choose, switch, or abandon solutions.

- Early-stage product discovery: very good at identifying unmet needs and opportunity spaces.

- Innovation-friendly: Reveals unmet jobs and constraints, guiding better product ideas.

- It supports early-stage product discovery, prioritisation, and cross-functional alignment through a shared language of outcomes.

- Its rigour helps teams focus on what truly drives choice and switching behaviour.

Where it struggles:

- JTBD research is demanding: it requires high-quality interviews and synthesis, and poor interviews or shallow synthesis can quickly undermine its value.

- Risk of oversimplification: May underplay emotional, social, or brand factors if applied narrowly.

- Execution gap: Defining the job does not automatically reveal the solution, particularly when innovation aims to create entirely new forms of value. Translating jobs into concrete features and metrics can be challenging.

- Not a complete framework: Needs to be combined with UX, ideation techniques and business analysis.

- Time-consuming: It takes considerable time to apply, and the learning process (if you want to build internal capabilities) is long and complex.

Bottom line

JTBD is powerful for understanding customer motivation and driving innovation, but it demands strong research skills and works best alongside other product methods.

The trap with JTBD is reductionism: assuming that once the “job” is defined, the solution naturally follows. Also, not all innovation is about fulfilling existing jobs; especially from a long-term perspective, some innovations create entirely new ones.

Lean Six Sigma

Lean Six Sigma excels at optimising known processes through data, rigour, and discipline. Lean thinking helped organisations eliminate waste, streamline operations, and improve quality. Its impact on operational excellence is undeniable.

What it is best at:

- Continuous improvement focus and data-driven – excels at optimising efficiency, reducing waste, and improving quality; relies on metrics and statistical analysis to identify and reduce variation and delivers measurable efficiency gains.

- Its structured approach, DMAIC (Define, Measure, Analyse, Improve, Control), provides a clear roadmap and supports repeatability, consistency, and operational excellence.

- Cost and time savings – often delivers measurable ROI by reducing defects and inefficiencies.

- Standardisation and consistency – builds repeatable, predictable processes.

- Problem-solving rigour – encourages root-cause analysis and disciplined decision-making.

Where it struggles:

- Less innovation-focused – primarily improves existing processes rather than creating new products or markets.

- Can be rigid – heavy reliance on data and structure may stifle creativity or flexibility.

- Applied too early, it can efficiently optimise the wrong thing.

- Resource-intensive – requires trained practitioners (Green/Black Belts) and time to execute projects.

- Limited in ambiguous or ill-defined problems – struggles when problems aren’t easily measurable.

- Cultural resistance – implementing LSS often requires organisational buy-in and cultural change.

Bottom line

Lean Six Sigma excels at process optimisation and quality improvement but is weaker for strategic innovation or ambiguous, emergent problems.

Agile

Agile optimises delivery in complex development environments. But when we talk about Agile as a concept, we must remember that it is essentially a simple set of values and principles defined in a manifesto. On its own, it is not directly usable as an execution approach.

Therefore, people developed concrete Agile methodologies from it. The most well-known and widely applied, especially beyond software, is Scrum.

What it is best at:

- Iterative and flexible – delivers value in small increments, adapts quickly to change.

- Customer-focused and business-driven development – continuous feedback ensures the product meets user needs.

- Fast delivery – prioritises high-value features and quick releases.

- Transparency and visibility – regular stand-ups, reviews, and retrospectives keep progress clear.

Where it struggles:

- Agile assumes direction already exists. Without strategic clarity, teams may move quickly without meaningful progress.

- Less strategic focus. Due to its limited scope, it does not address strategy, portfolio management, technology roadmaps, C-level engagement, or other topics that are, by definition, enterprise-wide.

- Can lead to scope creep – flexibility without discipline can dilute focus.

- Requires mature teams – teams must be self-organising and accountable.

- Scrum was originally designed as a software product development method. When it is used for product development in regulated or complex environments (such as mechanical engineering), it might be inefficient, as the product cannot be created iteratively, in small increments.

- Dependent on stakeholder engagement – frequent feedback cycles need active participation.

- Distributed teams – working across different time zones makes stand-ups and other Scrum rituals challenging.

Bottom line

Agile excels at rapid delivery, adaptability, and customer responsiveness, but it is less suited for long-term strategy, heavily regulated environments, or non-collaborative teams.

The common Agile trap is confusing activity with progress. Teams deliver faster, sprint after sprint, without ever stopping to ask whether they are heading in the right direction. Agile optimises how we move — not where we are going.

Also, because its focus is product development, it does not address core project management areas such as budgeting, change management, stakeholder management, or risk management.

Lean Start-Up

Lean Start-Up is designed for learning under uncertainty, before scaling execution. If Agile optimises how we deliver, Lean Start-Up tests whether what we are building should exist at all.

What it is best at:

- Reducing risk under uncertainty: Lean Start-Up is designed for situations where assumptions are high and evidence is low: new products, new markets, or new business models.

- Testing hypotheses quickly: It helps teams test risky assumptions through fast, evidence-based experiments.

- Resource efficiency in early stages: MVPs reduce over-investment while accelerating learning; it helps teams learn faster and fail cheaper.

- Its Build–Measure–Learn loop replaces opinion-driven debates with empirical insight.

- Its focus is not on building faster, but on learning whether customers actually care — and why.

Where it struggles:

- Limited strategic scope: Lean Start-Up tests assumptions, but does not define long-term vision, positioning, or competitive advantage.

- Shallow understanding risk: Poorly designed experiments or superficial customer feedback can create false confidence.

- Not suited for optimisation: Once a product or process is stable and well understood, Lean Start-Up adds little value.

- Cultural resistance: Organisations uncomfortable with uncertainty, failure, or experimentation often undermine its intent.

- Can be mistaken for speed: Running experiments quickly is not the same as learning meaningfully.

Bottom line

Lean Start-Up prevents organisations from efficiently building the wrong thing. It is a powerful method for learning what matters before scaling execution.

Its trap lies in mistaking experimentation for strategy — or in continuing to “test” long after direction should be set.

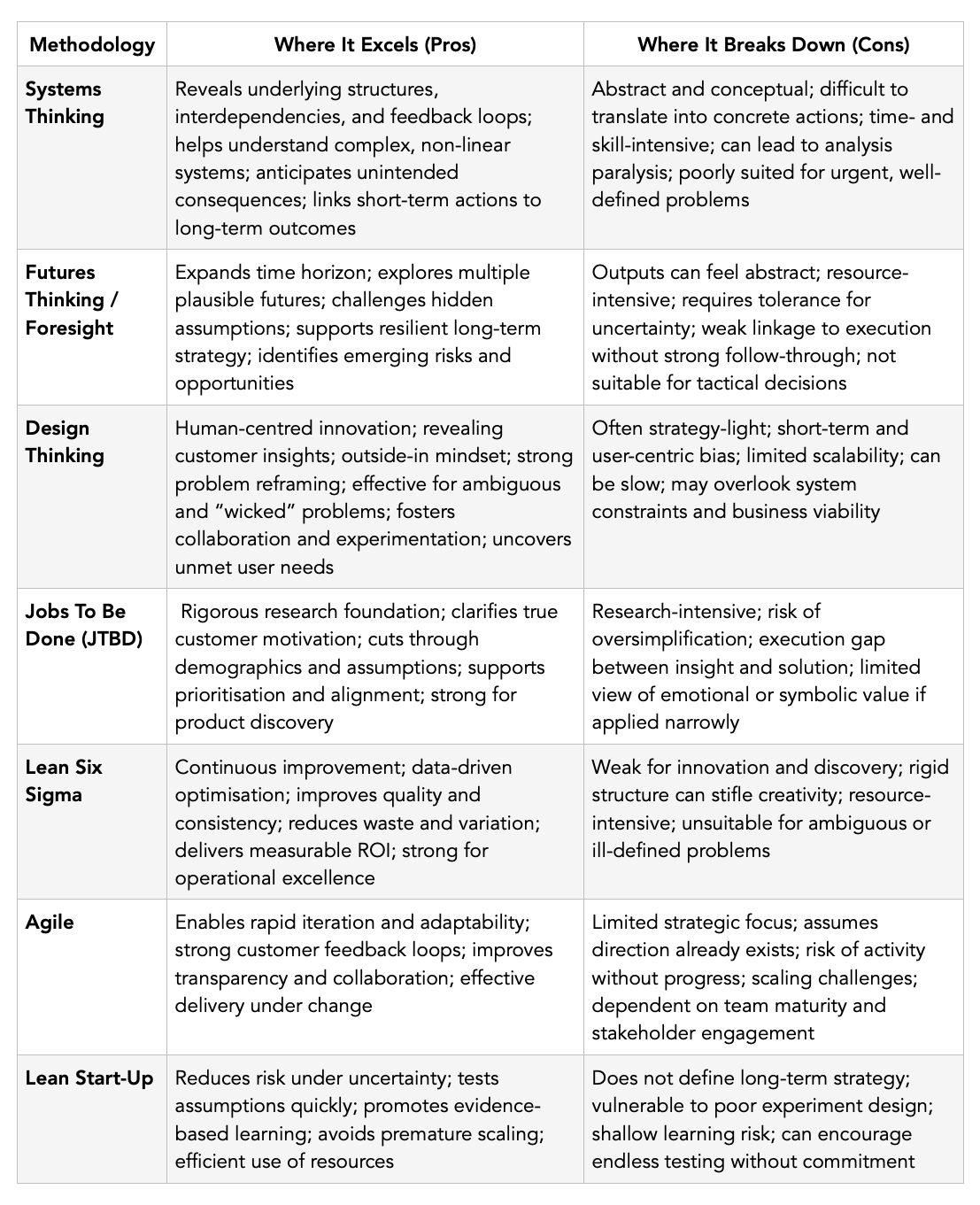

For anyone who wants the quick version, here’s a snapshot of each methodology’s key strengths and limitations at a glance.

Methodologies at a Glance: Strengths and Limitations

The real issue: methodological absolutism

As you can see, no methodology is universally right, and no framework works in all contexts.

The real danger lies in methodological absolutism, the belief that one approach is inherently superior, regardless of the situation.

As consultants, none of us can be an expert in every methodology, but my deep belief is that as long as we operate in this field, we should all develop a foundational level of generalism, exposing ourselves, as part of our learning, to a wide range of tools and frameworks. This will help us judge what is most appropriate in each context, use the right approach, and turn to colleagues who truly master a method when it falls outside our expertise.

Last but not least, we should increasingly operate in multidisciplinary teams, valuing each other’s competencies and recognising that every discipline requires years of study and practice to truly master.

In my opinion, mature organisations and mature consultants do not “implement” methodologies. They compose them. They borrow, adapt, combine, and abandon methods when necessary. Because the value is not in the method itself, but in the decisions and outcomes it enables.

A closing reflection

Methodologies are powerful, but blind without context. When methods come before thinking, organisations get busy; when thinking comes first, methods truly serve and organisations can truly flourish.

And sometimes, the most innovative move isn’t picking a new framework, but pausing to see the system you are already part of – its culture, mindsets, ambitions, and capabilities – and choosing the response that genuinely fits.

The good news? At HorizonX, we are building a diagnosis tool to support this process: a compass that will help organisations better understand the challenges they face and identify the frameworks, tools, or combinations that will work in their real context — not just in theory, but in practice. We will help them choose what fits — not what is fashionable. In some cases we might even create a custom, company-specific framework, if needed. Because the value is not in the method, it’s in the decisions and outcomes it enables.

We will be publishing more details about this tool in the coming weeks, as we truly believe it can guide decision-making and make life easier for many organisations. And this is at the heart of our mission.